KITTI-360 Dataset

- 07 October 2020

- Tübingen

- Autonomous Vision

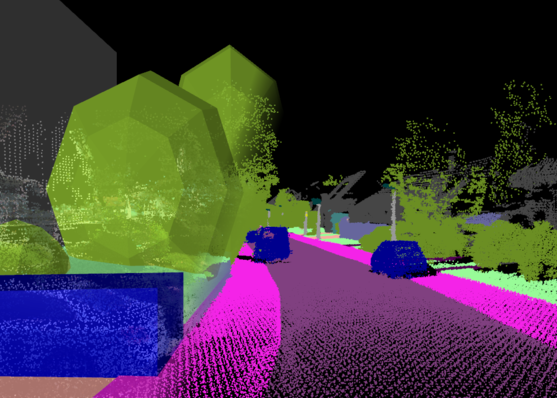

We have released the KITTI-360 dataset, a large-scale dataset with rich sensory data, accurate localisation and comprehensive annotations! It aims to foster research in important new areas relevant to the computer graphics, computer vision and robotics communities.

The dataset contains rich sensory information and full annotations. We recorded several suburbs of Karlsruhe, Germany, collecting over 320k images and 100k laser scans over a driving distance of 73.7 km. We annotate both static and dynamic 3D scene elements with rough bounding primitives and transfer this information into the image domain. This results in dense semantic and instance annotations for both 3D point clouds and 2D images.

- Driving distance: 73.7 km, frames: 4 × 83,000 (332,000 images in total)

- All frames accurately geolocalized (OpenStreetMap)

- Semantic label definition consistent with Cityscapes, 19 classes for evaluation

- Each instance assigned a consistent instance ID across all frames
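Because an object keeps the same instance ID in every frame, its per-frame annotations can be linked into a track simply by grouping on that ID. The sketch below illustrates the idea with a hypothetical `Detection` record and a hypothetical `group_by_instance` helper. Neither is part of the official KITTI-360 tooling, and the field names are assumptions for illustration, not the dataset's actual file format.

```python
from collections import defaultdict
from dataclasses import dataclass


# Hypothetical per-frame annotation record; the fields are illustrative
# and do not reflect the official KITTI-360 annotation format.
@dataclass(frozen=True)
class Detection:
    frame: int
    instance_id: int
    semantic_class: str


def group_by_instance(detections):
    """Map each instance ID to the sorted list of frames it appears in.

    With IDs that stay consistent across frames, grouping by ID
    yields one temporal track per object.
    """
    tracks = defaultdict(list)
    for det in detections:
        tracks[det.instance_id].append(det.frame)
    # Sort frame indices so every track is in temporal order.
    return {iid: sorted(frames) for iid, frames in tracks.items()}


if __name__ == "__main__":
    dets = [
        Detection(0, 7, "car"),
        Detection(1, 7, "car"),
        Detection(1, 12, "person"),
        Detection(3, 7, "car"),
    ]
    print(group_by_instance(dets))  # {7: [0, 1, 3], 12: [1]}
```

If IDs were re-assigned per frame instead, the same grouping would require an extra association step (for example, matching boxes between neighbouring frames) before tracks could be formed.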

For more information, please visit our website.